Projects

"Hi AirStar, Guide Me to the Badminton Court."

UAV multifunctional system

AirStar transforms drones into smart aerial companions by integrating a large language model as its cognitive core, enabling natural voice and gesture control for intuitive interaction. It combines geospatial navigation with real-time reasoning for precise movement, while offering advanced features like question answering, automated filming, and target tracking. Designed for extensibility, AirStar paves the way for next-gen, instruction-driven UAV assistants.

UAV-Flow Colosseo: A Real-World Benchmark for Flying-on-a-Word UAV Imitation Learning

Short-range fine-grained navigation

UAV-Flow consists of a large-scale real-world dataset for language-conditioned UAV imitation learning, featuring multiple UAV platforms, diverse environments, and a wide range of fine-grained flight skill tasks. To enable systematic experimental analysis under the Flow task setting, we additionally provide a simulation-based evaluation protocol and deploy VLA models on real UAVs. To the best of our knowledge, this is the first real-world deployment of VLA models for language-guided UAV control in open environments.

AeroDuo: Aerial Duo for UAV-based Vision and Language Navigation

Long-range planning navigation

We present DuAl-VLN, a novel framework in which two UAVs collaborate at different altitudes: a high-altitude UAV handles global reasoning and a low-altitude UAV handles precise navigation, enabling efficient, autonomous flight guided by natural language. Powered by the HaL-13k dataset and our AeroDuo system, which combines a multimodal Pilot-LLM with lightweight navigation policies, this approach achieves a 9.71% higher success rate than single-UAV methods, demonstrating the power of collaborative aerial intelligence.

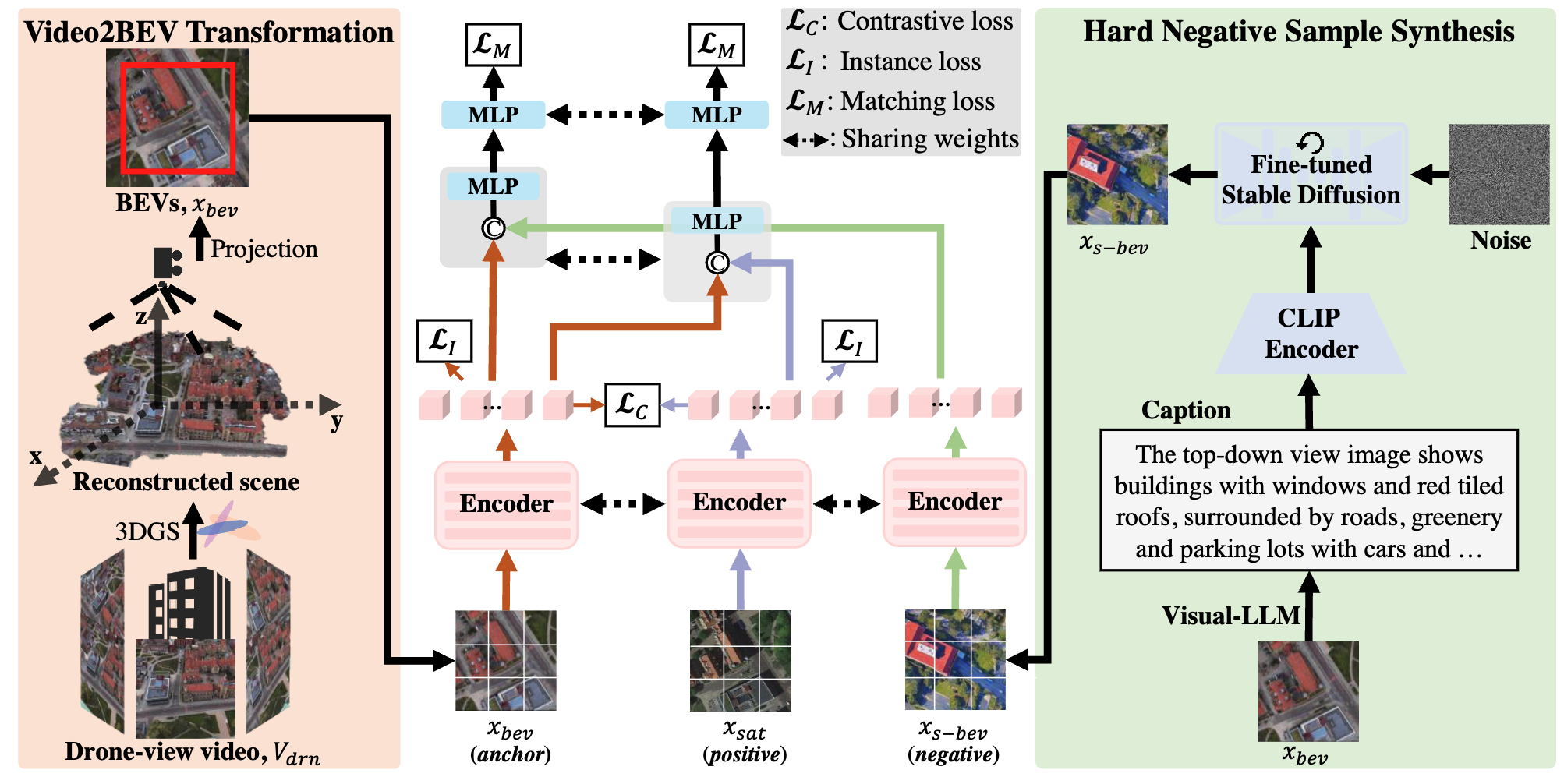

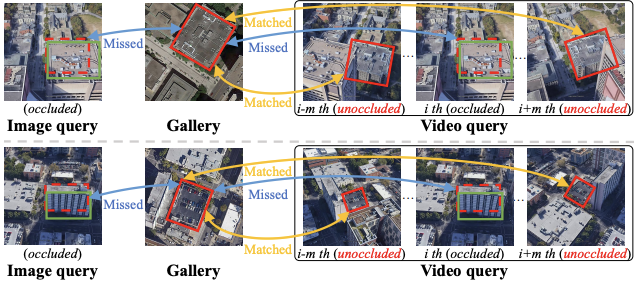

Video2BEV: Transforming Drone Videos to BEVs for Video-based Geo-localization

Perception and Geo-localization

We present Video2BEV, an approach to drone geo-localization that converts drone videos into detailed bird's-eye-view (BEV) maps for precise location matching. Unlike single-image methods, our Gaussian Splatting-based 3D reconstruction preserves fine-grained details without distortion, while a diffusion-powered hard negative sampling module enhances model adaptability. Tested on our new UniV dataset, which features high-frame-rate drone flights, Video2BEV outperforms traditional methods, especially in low-altitude, occlusion-heavy scenarios, supporting robust video-based geo-localization.

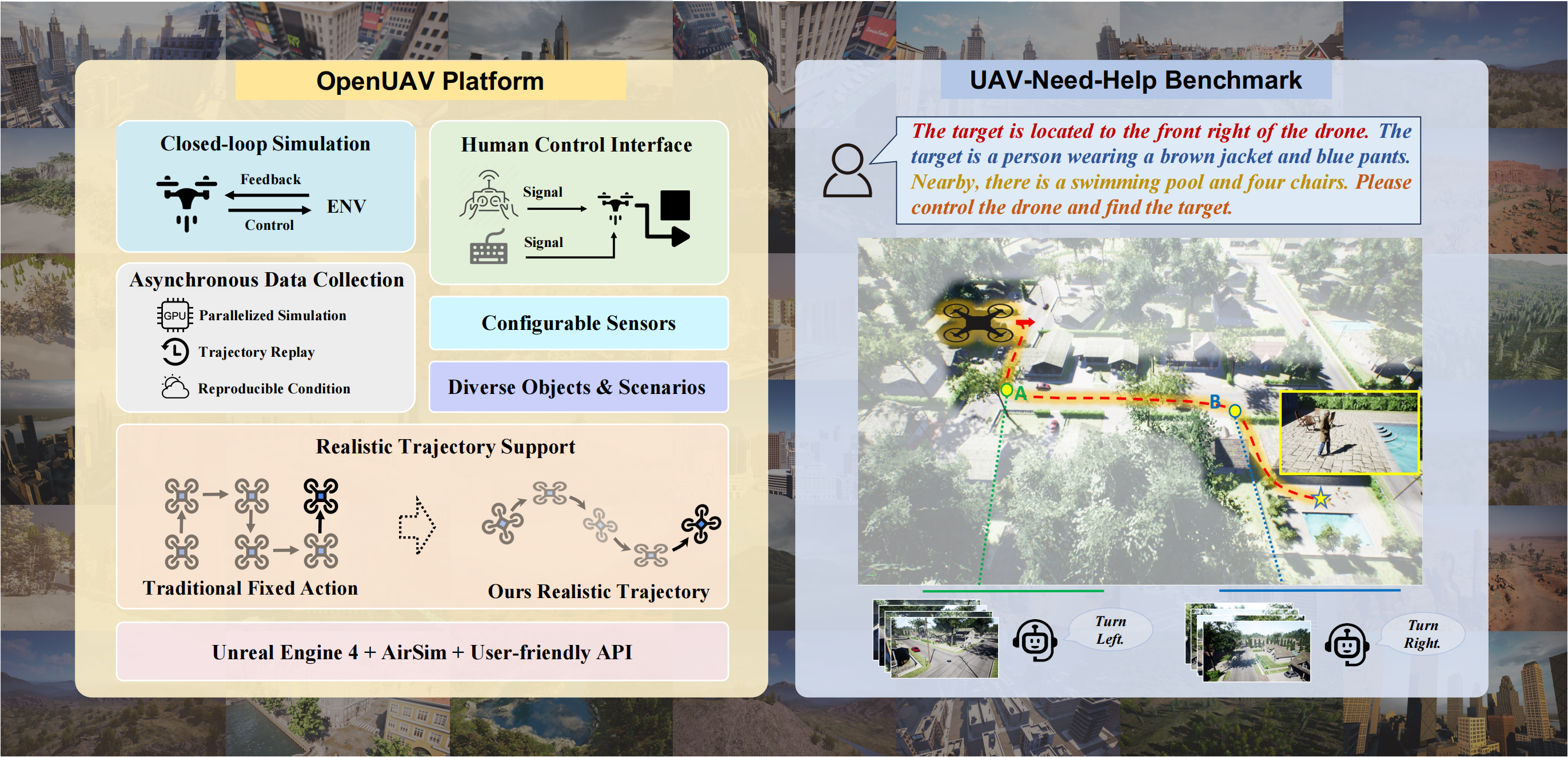

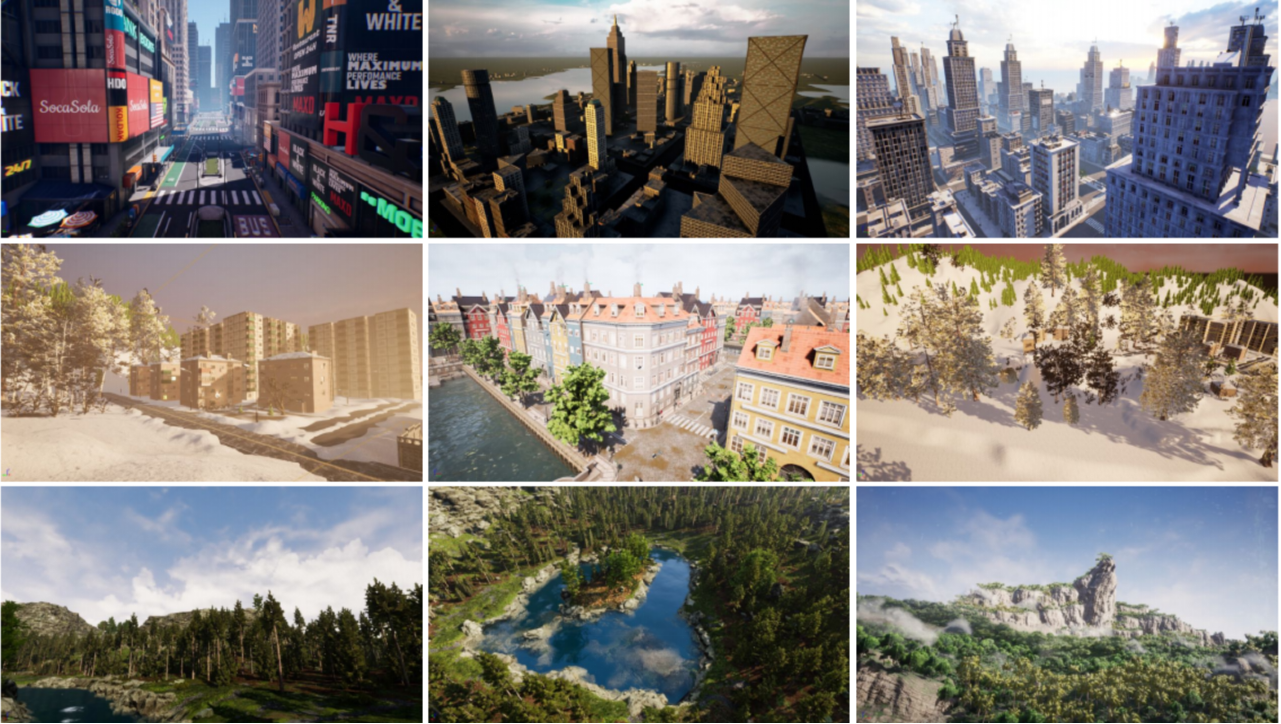

Towards Realistic UAV Vision-Language Navigation: Platform, Benchmark, and Methodology

UAV Simulation Platform

We propose a realistic UAV simulation platform and a novel UAV-Need-Help benchmark. The OpenUAV platform focuses on realistic UAV vision-language navigation tasks, integrating diverse environmental components, realistic flight simulations, and algorithmic support. The UAV-Need-Help benchmark introduces an assistant-guided UAV object search task, where the UAV navigates to a target object using object descriptions, environmental information, and guidance from assistants.

Our Team

Prof. Si Liu

Prof. Linjiang Huang

Yue Liao

Shaofei Huang

Jinyu Chen

Ziqin Wang

Xiangyu Wang

Donglin Yang

Ruipu Wu

Yige Zhang

Qinan Liao

Xiangyi Zheng

Wenhao Zheng